Give Your Business a Smarter Voice

We build custom LLM applications that make your systems feel naturally intelligent.

Why LLMs Matter

Most organizations are surrounded by information but struggle to use it. Teams sift through emails, reports, messages, manuals, contracts, and knowledge bases that grow faster than anyone can keep up with. The result is slow decisions, repeated work, and knowledge that disappears the moment someone leaves the company.

Large language models change that dynamic.

LLMs read and understand your information at a scale no human team can match. They summarize, interpret, search, draft, explain, classify, and connect ideas across your entire business. They take the friction out of communication and make complex tasks feel simple.

At Tech360, our LLM development services turn this capability into something practical. We design systems that behave like reliable teammates. They understand context, stay grounded in your data, and respond in a way that reflects your standards. We do not build experiments. We build tools that help people work faster and with greater clarity.

Whether you want to automate heavy documentation work, assist customers with accurate answers, or empower teams with instant knowledge retrieval, we help you turn language into a strategic advantage.

Your business already knows more than it can process. LLMs finally make that knowledge usable.

Our Full Suite of LLM Development Services

LLMs are not plug-and-play solutions. They require the right design, the right data, and the right guardrails. Our large language model development services focus on building systems that are dependable, efficient, and aligned with your business.

Here is how we help you put language intelligence to work:

- LLM Strategy and Use Case Design

- Large Language Model Development Services

- Custom LLM Applications

- Workflow Automation With LLMs

- Integration and Deployment

- LLM Governance and Safety

Why LLMs Matter More Than Ever

Businesses are facing an explosion of unstructured information.

Teams cannot keep up with the volume, and customers expect faster and more precise answers.

Without LLMs, organizations face:

- repeated manual work

- long response times

- knowledge trapped in documents

- inconsistent communication

- errors caused by overload

- rising operational costs

- weak internal visibility

LLMs do not replace people. They give people room to think by handling the reading, writing, and searching that consume most of the day.

Tech360’s Approach to LLM Development

Our approach is simple: build LLM systems that feel natural, safe, and genuinely useful.

Process:

We study how information moves through your organization. This helps us design systems that support your workflows instead of forcing new ones.

Technology:

We choose the right models, frameworks, retrieval layers, and deployment patterns based on fit, scalability, and long-term sustainability.

People:

We focus on adoption. Teams understand how the system works, where to rely on it, and how to get the best results from it.

The Tech360 Advantage

Organizations choose us because LLMs are not simple tools. They influence accuracy, communication, productivity, and trust. We build systems that respect those stakes.

Here is what sets Tech360 apart:

Practical Models Built for Real Work

We do not chase trends. We build models that support decisions, eliminate repetitive tasks, and make information easier to use. Every design choice is tied to a real business goal.

Industry Awareness

We understand how different sectors speak, document, and enforce precision:

- Finance requires accuracy.

- Healthcare requires compliance.

- Retail requires speed.

- Manufacturing requires clarity.

The list goes on. This experience helps us build Custom LLM Applications that feel native to your environment.

Architecture That Lasts

We design cloud-native frameworks that scale with your business. They support real-time reasoning, retrieval, secure data handling, and efficient inference. Your environment stays fast, predictable, and cost-effective.

Long-Term Partnership

An LLM is never finished. It must grow with your data, your policies, and your teams. We support you through training, evaluation, optimization, and version management.

A Model That Sounds Like You

LLMs should speak with your tone, values, and standards. We fine-tune behavior so the model feels like a trusted colleague rather than a generic chatbot.

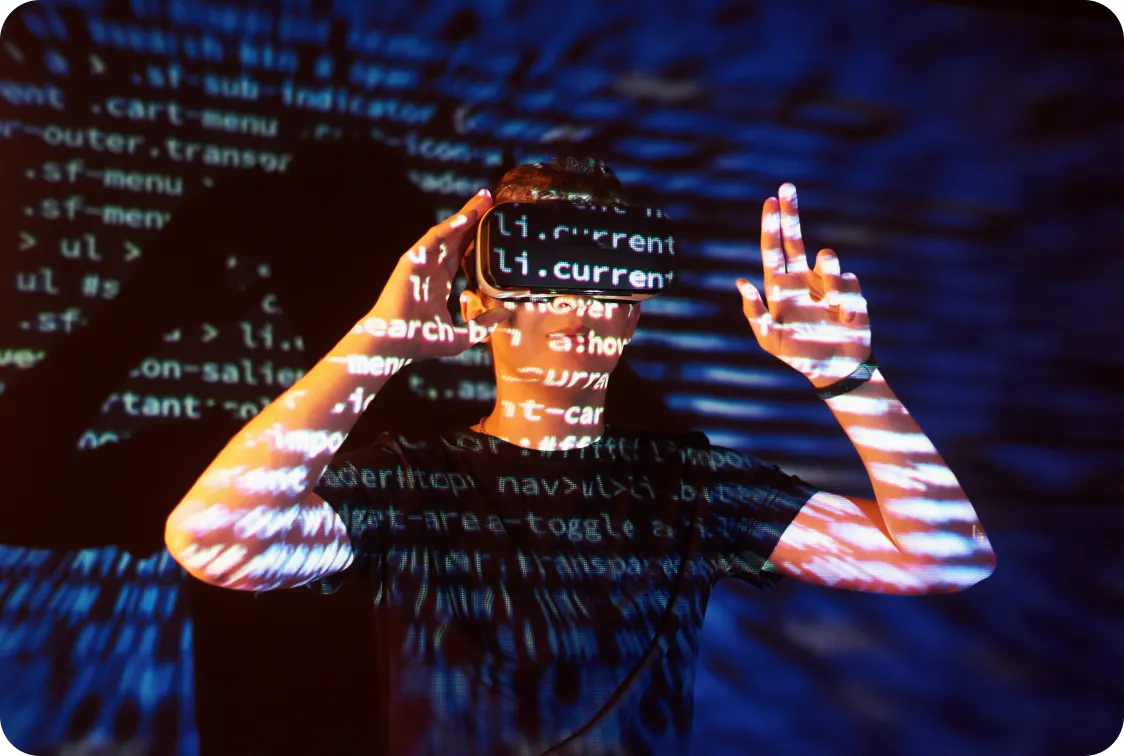

The Future of LLMs in 2025 and Beyond

Large language models are moving out of the experimental phase and becoming a core layer of enterprise intelligence. Over the next few years, the industry will shift from seeing LLMs as novelty tools to treating them as essential infrastructure. The transformation will not happen because of hype, but because organizations can no longer manage the complexity, volume, and velocity of their information without help.

The first major shift is the rise of real-time language intelligence. Traditional models rely on static training, but the next generation of LLMs will reference live data as naturally as a human consultant reviewing the latest reports. This will lead to assistants that know current policies, updated inventory, fresh customer conversations, and newly published documents. Decisions will become more grounded because the model is no longer relying on outdated snapshots.

Another important trend is the move toward smaller, domain-trained models that can outperform much larger general-purpose models on accuracy and speed within their own domain. Organizations want models fluent in their industry language, trained on focused datasets, and optimized for cost efficiency. For domain-specific work, these specialized LLMs can deliver sharper insights than broad, general-purpose models while holding up under heavy load. The industry is already shifting toward architectures that reduce memory requirements and decrease inference time without sacrificing quality.

Multi-modal intelligence will also reshape the landscape. Enterprises will expect models to interpret text, images, audio, video, diagrams, charts, and structured data in a single workflow. A quality issue on a manufacturing line, for example, might require the model to read a PDF report, analyze an image of a defective part, review an engineer’s notes, and generate a clear summary for leadership. The integration of formats will make LLMs more capable, more intuitive, and far more relevant to real-world operations.

Transparency is becoming a central requirement. Companies want to understand how an answer was generated, which information sources were referenced, and why certain conclusions were drawn. This is not only a governance concern. It is a usability concern. Teams are more likely to rely on an LLM when they can see the reasoning behind its output. As a result, models will include features that reveal citation paths, retrieval logs, and decision steps in plain language.

LLMs are also becoming deeply integrated into operational workflows. Rather than living inside a single interface, they will provide background intelligence across systems. A customer agent will receive suggested responses that reflect real company policy. A supply chain manager will receive clarifications based on real shipment data. A compliance team will gain automated interpretations of regulatory changes. The LLM will not be a separate tool. It will become part of the organization’s muscle memory.

Finally, governance will evolve from a set of after-the-fact controls to a proactive discipline. Organizations will need clear policies for data privacy, accuracy monitoring, output validation, and responsible use. They will expect partners like Tech360 to design LLM ecosystems that support transparency, safety, and predictable model behavior. Governance will not slow innovation. It will allow teams to innovate with confidence.

The future of LLMs is not defined by technology alone. It is defined by their ability to help people think, work, and decide with far less friction. That is where the real transformation will happen.

LLM Optimization Trends for 2026

As LLMs mature inside enterprises, the focus will shift from building them to refining them. Optimization will become the core differentiator between organizations that simply deploy language models and those that use them as a genuine advantage. The industry is beginning to recognize that the initial launch of an LLM is only the first step. The real work happens in the months and years that follow.

One of the most significant areas of optimization is continuous fine-tuning. Models must evolve alongside the business. New products, new policies, new terminology, and new market conditions all influence how the system should respond. Organizations will adopt structured processes that capture user feedback, identify weak points, and feed that information back into an automated fine-tuning cycle. The LLM becomes sharper not because of periodic overhauls, but because it learns through ongoing use.

Retrieval-augmented generation will become the foundation of enterprise reliability. While early LLM deployments depended heavily on pretraining, the future lies in grounding outputs in trusted internal data. Retrieval layers will gather verified content from internal knowledge bases, policy documents, case files, and operational systems before the model forms a response. This reduces hallucinations, improves accuracy, and ensures answers reflect current information. Companies will judge LLM quality not just by eloquence but by factual reliability.
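To make the retrieval step concrete, here is a minimal sketch of the idea in Python. It is an illustration, not a production design: the `Passage` type, the file names, and the sample policy text are all hypothetical, and simple keyword overlap stands in for whatever retrieval layer (for example, vector search) a real deployment would use. No model is called; the sketch only shows how trusted content is gathered and attached to the question before a model would form a response.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical document identifier
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[Passage], k: int = 2) -> list[Passage]:
    """Rank passages by shared query words; keep the top k that overlap at all."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]
    # Stable sort: passages with equal scores keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_prompt(query: str, retrieved: list[Passage]) -> str:
    """Attach numbered sources to the question so the answer can cite them."""
    if not retrieved:
        return (
            "No approved source covers this question. "
            "Say so instead of guessing.\n\nQuestion: " + query
        )
    context = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(retrieved, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them by number.\n\n"
        f"{context}\n\nQuestion: {query}"
    )


# Made-up sample content standing in for an internal knowledge base.
KNOWLEDGE_BASE = [
    Passage("returns-policy.md", "Customers may return unopened items within 30 days of delivery."),
    Passage("shipping-faq.md", "Standard shipping takes three to five business days."),
    Passage("warranty.md", "Hardware carries a one year limited warranty."),
]
```

Calling `build_prompt(question, retrieve(question, KNOWLEDGE_BASE))` yields a prompt whose numbered sources can be cited in the answer. When nothing relevant is found, the prompt asks the model to say so rather than guess.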

Performance optimization will be another priority. Enterprises will look closely at latency, memory usage, model size, throughput, and cost per interaction. They will tune prompts, restructure context windows, introduce caching layers, and select right-sized models for different workloads. Instead of relying on a single large model for everything, organizations will adopt a portfolio of models that are each optimized for a specific purpose. This approach reduces cost while improving responsiveness.
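A small sketch can show how right-sizing and caching work together. Everything here is an assumption made for illustration: the two model tiers, their relative costs, the 200-word cutoff, and the task names are invented, and the model call itself is a stand-in string. The cache is Python's standard `functools.lru_cache`.

```python
from functools import lru_cache

# Hypothetical model tiers with made-up relative costs, for illustration only.
MODEL_COST = {"small": 1, "large": 10}

# Records which tier handled each real (uncached) call.
call_log: list[str] = []


def pick_model(task: str, prompt: str) -> str:
    """Send short, simple tasks to the small model and everything else to the large one."""
    simple_tasks = {"classify", "extract"}
    if task in simple_tasks and len(prompt.split()) <= 200:
        return "small"
    return "large"


@lru_cache(maxsize=1024)
def answer(task: str, prompt: str) -> str:
    """Return a response, reusing the cached result for repeated identical requests."""
    model = pick_model(task, prompt)
    call_log.append(model)  # only runs on a cache miss
    return f"[{model}] response to: {prompt}"  # stand-in for a real model call
```

In this sketch, asking the same classification question twice reaches the small tier once and the cache once, so the expensive tier is reserved for requests that need it. A real system would also need cache invalidation when the underlying data changes, which is left out here.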

Deployment will also become more flexible. Some organizations will run LLMs in the cloud for scalability, while others will use on-prem systems for security-sensitive workloads. Edge deployment will grow in importance where immediacy is critical, such as field operations or quality control stations. Hybrid deployments will become standard, allowing teams to balance privacy, speed, and reliability based on the demands of each use case.

Human oversight will remain essential. Even the most advanced models benefit from expert review, especially in high-stakes areas like legal interpretation, compliance, finance, and healthcare. Organizations will establish clear workflows where humans validate outputs, correct inaccuracies, and refine behavioral patterns. This human-in-the-loop structure will turn model improvement into an everyday practice rather than a periodic initiative.
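One simple way to picture such a workflow is a triage step that decides which outputs a person must check before they go out. The sketch below is an assumption-laden illustration: the `Draft` type, the `confidence` score, and the 0.8 threshold are hypothetical, while the high-stakes domains come from the paragraph above.

```python
from dataclasses import dataclass

# High-stakes areas named in the text; outputs here always go to a reviewer.
HIGH_STAKES_DOMAINS = {"legal", "compliance", "finance", "healthcare"}


@dataclass
class Draft:
    domain: str
    text: str
    confidence: float  # hypothetical score in [0, 1] from an evaluation step


def needs_review(draft: Draft, threshold: float = 0.8) -> bool:
    """Flag a draft if its domain is high-stakes or its confidence is below the threshold."""
    return draft.domain in HIGH_STAKES_DOMAINS or draft.confidence < threshold


def triage(drafts: list[Draft], threshold: float = 0.8) -> tuple[list[Draft], list[Draft]]:
    """Split drafts into (needs human review, can be sent automatically)."""
    review: list[Draft] = []
    auto: list[Draft] = []
    for draft in drafts:
        (review if needs_review(draft, threshold) else auto).append(draft)
    return review, auto
```

Corrections made by reviewers in the first queue are the natural raw material for the ongoing refinement described above.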

The final optimization trend involves measurement. Enterprises will adopt new metrics that focus on practical outcomes rather than technical benchmarks. They will evaluate:

- How much time employees save

- How many errors the model prevents

- How quickly customers receive accurate answers

- How much knowledge becomes easier to find

- How clearly decisions are supported

These metrics will give organizations a better understanding of how LLMs influence real work. They will make optimization a business conversation rather than a technical one.
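As a rough illustration of how outcome metrics like these could be computed, the sketch below summarizes a log of interactions. The `Interaction` fields are hypothetical, and how each value is captured (surveys, reviewer labels, timestamps) is an assumption that will differ from one organization to the next.

```python
from dataclasses import dataclass
from statistics import mean, median
from typing import Optional


@dataclass
class Interaction:
    minutes_saved: float      # hypothetical estimate of employee time saved
    answer_correct: bool      # hypothetical label from a reviewer or spot check
    seconds_to_answer: float  # time from question to response


def summarize(log: list[Interaction]) -> dict[str, Optional[float]]:
    """Roll an interaction log up into a few outcome-focused numbers."""
    if not log:
        raise ValueError("log must contain at least one interaction")
    correct_times = [i.seconds_to_answer for i in log if i.answer_correct]
    return {
        "total_minutes_saved": sum(i.minutes_saved for i in log),
        "accuracy_rate": mean(1.0 if i.answer_correct else 0.0 for i in log),
        # Median response time, counting only answers that were accurate.
        "median_seconds_to_accurate_answer": (
            median(correct_times) if correct_times else None
        ),
    }
```

Reporting the median time for accurate answers only keeps a fast but wrong response from flattering the numbers.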

Partners like Tech360 are positioned to support this continuous evolution, not by enforcing strict templates but by helping organizations shape LLM ecosystems that stay relevant, dependable, and aligned with the pace of their industry. Optimization is the new frontier in LLM adoption, and the companies that embrace it will be the ones that stay ahead.

FAQs

Frequently Asked Questions

A large language model, or LLM, is an AI system trained to understand and generate natural language with a level of fluency that feels intuitive to humans. It can summarize documents, interpret questions, generate content, classify text, and connect ideas across massive volumes of information. For modern enterprises, this means employees can rely on a system that reads as widely and consistently as they do, but at a scale no team can match. Businesses use LLMs to reduce manual workload, improve accuracy in communication-heavy tasks, and unlock insights hidden in unstructured data. When implemented through well-designed LLM Development Services, these systems become practical tools that elevate decision-making and remove operational friction.

LLM Development Services cover the full lifecycle of building, refining, and deploying an enterprise-ready language model. This starts with identifying meaningful use cases, analyzing business workflows, and preparing the internal data that will shape the model's behavior. From there, teams build or fine-tune the model so it understands industry terminology, company policy, and contextual nuance. The service also includes integration work, retrieval design, evaluation, governance, optimization, and long-term maintenance. In other words, large language model development services create an intelligent system that fits naturally into your organization rather than forcing your organization to fit the model.

Custom LLM Applications allow language intelligence to show up exactly where work happens. Instead of relying on a single interface, organizations can build assistants for customer support, summarizers for long documents, analyzers for contracts, internal search tools, report generators, coaching systems, and workflow companions for analysts or managers. These applications are trained to understand the business context, the tone your organization expects, and the rules that govern decisions. They reduce repetitive reading and writing, increase precision, and help teams get answers without digging through countless documents. This turns everyday tasks into faster, clearer, and more confident work.

Modern large language model services are designed to integrate with CRMs, ERPs, ticketing systems, customer communication platforms, knowledge bases, document repositories, and internal operational tools. Integration is achieved through secure APIs and, when needed, retrieval pipelines that allow the model to reference your internal data. This ensures that every answer reflects real company knowledge rather than generic training. The integration process also includes access controls, audit logs, compliance checks, and validation workflows that fit into your existing governance structure. A well-integrated LLM becomes a natural extension of your systems, not an additional layer of complexity.

Accuracy comes from a combination of fine-tuning, retrieval, governance, and careful prompt design. Retrieval-augmented generation anchors the model in verified internal content, which significantly reduces hallucinations by ensuring the model references reliable sources. Regular fine-tuning keeps the model aligned with changing terminology, policies, and products. Governance frameworks establish rules for what the model can and cannot say, how sensitive information is handled, and how users can flag questionable outputs. Continuous monitoring allows teams to catch changes in performance early. When large language model development services are implemented well, hallucinations become rare, predictable, and manageable.

Training or fine-tuning an LLM requires access to the language your organization uses every day. This often includes policies, product documentation, FAQs, emails, tickets, transcripts, chat logs, knowledge base entries, support playbooks, reports, compliance guidelines, and operational manuals. The goal is not to collect every document ever written, but to curate the information that reflects how your teams work and communicate. The more relevant and consistent the data, the better the model will understand your domain. With strong data preparation and large language model services, you can shape a model that behaves like an experienced insider.

When implemented correctly, LLMs can operate with the same level of security as any mission-critical system. Enterprises have the option to deploy models within private cloud environments or on-prem infrastructure, which keeps data fully under organizational control. Access controls ensure that only authorized users can interact with the model. Audit logging provides visibility into usage patterns and data access. Retrieval pipelines can be restricted to approved sources. Governance layers prevent the model from generating sensitive or noncompliant output. With proper architecture and disciplined large language model development services, LLMs can meet even the strictest security and regulatory requirements.

LLMs do not replace people. They remove the repetitive, time-consuming tasks that distract people from the work that actually requires expertise. Instead of spending hours reading documents, searching for information, rewriting emails, or pulling together reports, employees can focus on analysis, creativity, judgment, and relationships. The model becomes a support system, not a substitute. Organizations that use Custom LLM Applications well often report that employees feel less overloaded, make decisions faster, and spend more time on work that brings real value. The goal is not replacement. It is amplification.

The timeline depends on the complexity of your use cases, the readiness of your data, and the level of customization required. A simple assistant built on top of an existing model with minimal fine-tuning can be deployed in a matter of weeks. A fully customized LLM with domain adaptation, retrieval systems, governance layers, and multiple integrations often takes several months. Large language model development services typically follow a clear path: discovery, data preparation, model design, evaluation, integration, and rollout. The goal is to move quickly, avoid unnecessary complexity, and deliver early wins that build momentum.

Companies choose Tech360 because they need more than a model. They need a partner who understands how information flows through an organization and how language influences decisions. Tech360 focuses on clarity, trust, practicality, and measurable impact. The team brings experience across industries, strong engineering discipline, and a deep understanding of how to design Custom LLM Applications that feel natural rather than experimental. Tech360 builds systems that are transparent, secure, and tuned to your real workflows. The goal is not to deliver a flashy demo, but to create a dependable intelligence layer that improves the way your organization thinks and works.

Success Stories

Success Beyond Code!

“From Sticky Notes to 100% Seamless Operations”

A regional retailer wanted to “go digital” but was drowning in legacy systems and paper-heavy processes. Tech360 stepped in with digital transformation services that modernized their operations end-to-end: cloud migration, workflow automation, and real-time analytics. Within 6 months, they cut manual tasks by 40%, launched an online storefront, and doubled customer engagement. The CEO put it best: “We used to survive on sticky notes and gut instinct. Now we actually know what’s happening, and customers notice.” Transformation doesn’t always start flashy; sometimes it’s just about finally getting the basics right.

“From Prototype Struggles to Market Success”

A fast-growing startup had an idea for a healthcare app but kept stalling after failed MVP attempts. Tech360’s product engineering services guided them from concept to launch: ideation, prototype, testing, and full-scale development. We built a secure, scalable app that integrated seamlessly with medical devices, all while meeting HIPAA standards. The result? A product that hit the market three months early and attracted a major investor round. That’s the power of structured software product engineering: clarity from day one.

“Turning Salesforce into a Sales Engine”

A mid-sized B2B company had Salesforce but treated it like an expensive Rolodex! Sales reps hated it, managers ignored it, and data lived everywhere but there. Tech360 brought in Salesforce development services and a certified team to customize workflows, integrate third-party systems, and build dashboards that actually answered business questions. Within weeks, sales adoption skyrocketed, reporting accuracy improved by 60%, and quarterly revenue jumped. The client admitted, “We finally feel like Salesforce is working for us, and not the other way around.”